Edge AI for Biome Classification

Work in progress: this post is still being written and will be completed soon.

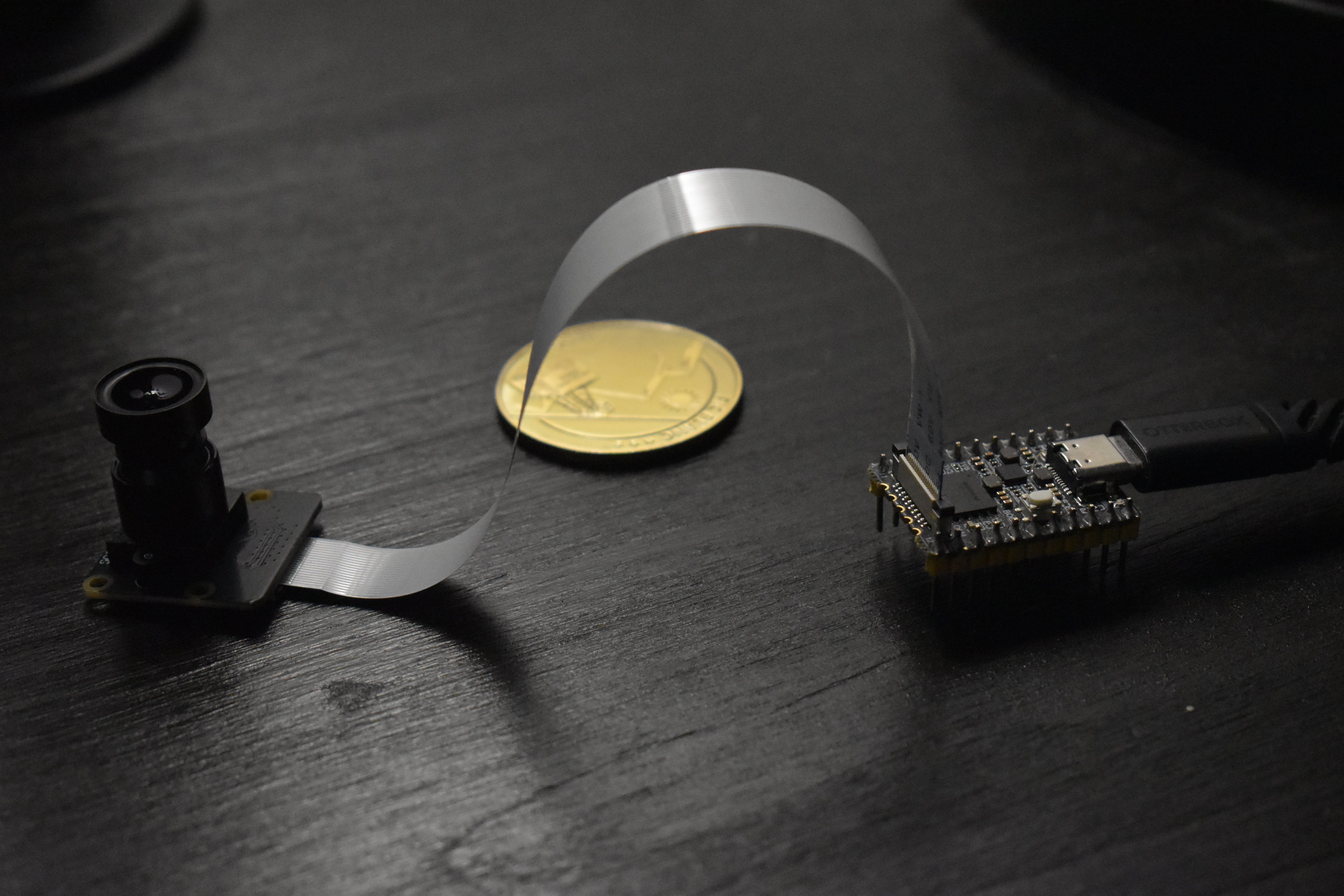

The goal of this project is to classify biomes (forest, urban, water, etc.) using a camera and a small embedded computer. Why? Because I have a Luckfox Pico board with an RV1103 SoC and a CSI camera lying around, and I want to learn how to build real-time edge AI systems. Later, this could be useful as a vision module for my autonomous flying wing project.

The RV1103 is a System-on-Chip (SoC) that includes a single-core ARM Cortex-A7 processor and a built-in NPU (Neural Processing Unit) rated at 0.5 TOPS (tera-operations per second). This makes it suitable for lightweight AI inference on the edge, without needing a cloud connection. It runs Linux and supports common interfaces like CSI for camera input, making it a good low-power platform for experimentation.
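To get a feel for what 0.5 TOPS means in practice, here is a rough back-of-the-envelope sketch. The model cost (0.6 GOPs per inference) and the 20% utilization factor are illustrative assumptions of mine, not measurements on the RV1103, and the TOPS rating is a peak figure that real workloads rarely reach.

```python
def peak_inferences_per_second(npu_tops, model_gops, utilization=1.0):
    """Upper bound on inferences per second from raw operation counts.

    npu_tops    -- NPU rating in tera-operations per second
    model_gops  -- cost of one forward pass in giga-operations (assumed value)
    utilization -- fraction of the peak rating actually achieved (assumed value)
    """
    ops_per_second = npu_tops * 1e12 * utilization
    ops_per_inference = model_gops * 1e9
    return ops_per_second / ops_per_inference


# Hypothetical small classifier costing 0.6 GOPs per frame.
ideal = peak_inferences_per_second(0.5, 0.6)
# Same model, assuming only 20% of the peak rating is reached.
derated = peak_inferences_per_second(0.5, 0.6, utilization=0.2)

print(f"ideal bound:   {ideal:.0f} inferences/s")
print(f"derated bound: {derated:.0f} inferences/s")
```

This only bounds the NPU compute. Frame capture, pre-processing on the single Cortex-A7 core, and memory bandwidth will likely limit the real frame rate well before the NPU does.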

In this first part, I focus on setting up a working AI pipeline: capturing frames, attempting to run inference, and visualizing the output.

Setting up the environment

After connecting to the RV1103 via SSH over USB, I can see that the camera is successfully recognized and that an rkipc.ini file has been generated:

[root@luckfox root]# ls /userdata/

ethaddr.txt image.bmp rkipc.ini video0 video1 video2

The rkipc.ini file is the main configuration file used by the Rockchip IP Camera (RKIPC) service running on the SoC. It controls how the video, audio, ISP (Image Signal Processor), and encoding pipelines are initialized. It includes settings for video resolution, frame rate, encoding format (e.g., H.265), image enhancement, exposure, and more. These parameters are used to configure the hardware and drivers at boot time or when the camera service starts.

Modifying this file lets us tune the system for our specific use case, for example by reducing the resolution to increase inference speed.
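As an illustration of that kind of edit, here is a minimal Python sketch that rewrites selected keys in one section of an INI-style text while leaving comments and other sections untouched. The section name `video.0`, the `width` and `height` keys, and the sample contents are assumptions for the example; check them against the actual rkipc.ini on the board before changing anything.

```python
def set_ini_values(text, section, updates):
    """Return `text` with the given keys in `section` set to new values.

    Works line by line so comment lines and unrelated sections are kept as-is.
    Any inline comment on a rewritten line is dropped.
    """
    pending = {key: str(value) for key, value in updates.items()}
    current = None
    out = []
    for line in text.splitlines():
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            current = stripped[1:-1].strip()  # entering a new section
        elif (current == section and "=" in stripped
              and not stripped.startswith((";", "#"))):
            key = stripped.split("=", 1)[0].strip()
            if key in pending:
                line = f"{key} = {pending.pop(key)}"
        out.append(line)
    if pending:
        raise KeyError(f"keys not found in [{section}]: {sorted(pending)}")
    return "\n".join(out) + "\n"


# Hypothetical sample, NOT the real contents of rkipc.ini.
SAMPLE = """\
[video.0]
; main stream
width = 1920
height = 1080

[video.1]
width = 704
height = 576
"""

updated = set_ini_values(SAMPLE, "video.0", {"width": 640, "height": 480})
print(updated)
```

On the board, the same function could be applied to the text read from /userdata/rkipc.ini. It is worth keeping a backup copy first, and the camera service presumably needs a restart before the new values take effect.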

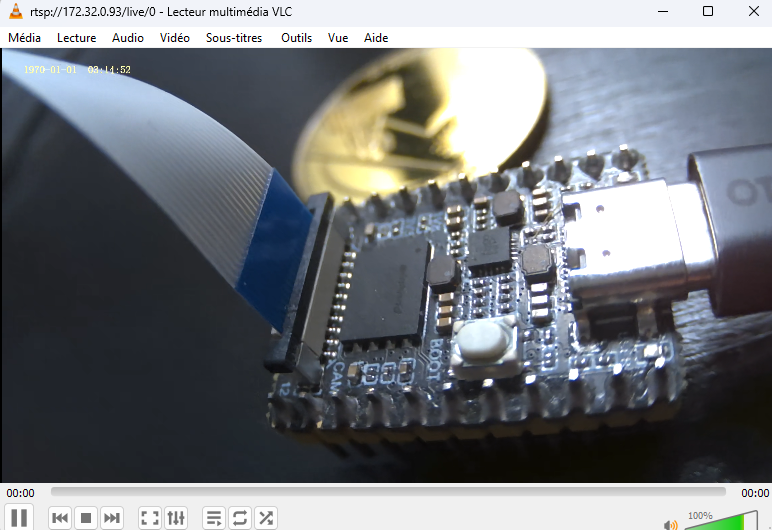

We can check that the camera is working by viewing its network video stream:
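One way to do this check from a PC is to grab a single frame from the stream with OpenCV. This is only a sketch: it assumes the camera service exposes an RTSP stream, that the stream path is `live/0`, and that the `opencv-python` package is installed on the PC. The host address and path have to be adapted to the actual setup.

```python
def rtsp_url(host, path="live/0"):
    """Build an RTSP URL; the default path is an assumption, not a known fact."""
    return f"rtsp://{host}/{path}"


def grab_frame(url):
    """Return one decoded frame from the stream, or None if nothing was read."""
    import cv2  # imported lazily so the URL helper works without OpenCV

    cap = cv2.VideoCapture(url)
    try:
        ok, frame = cap.read()
        return frame if ok else None
    finally:
        cap.release()
```

Calling `grab_frame(rtsp_url(host))` with the board's IP address as `host` should return an image array if the stream is up, and `None` otherwise. Saving the result with `cv2.imwrite` is a quick way to inspect it.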

Work in progress…